Variational Autoencoder#

pimmslearn - INFO Experiment 03 - Analysis of latent spaces and performance comparisions

Papermill script parameters:

# files and folders

# Datasplit folder with data for experiment

folder_experiment: str = 'runs/example'

folder_data: str = '' # specify data directory if needed

file_format: str = 'csv' # file format of the created splits, e.g. 'csv' or pickle ('pkl')

# Machine parsed metadata from rawfile workflow

fn_rawfile_metadata: str = 'data/dev_datasets/HeLa_6070/files_selected_metadata_N50.csv'

# training

epochs_max: int = 50 # Maximum number of epochs

batch_size: int = 64 # Batch size for training (and evaluation)

cuda: bool = True # Whether to use a GPU for training

# model

# Dimensionality of encoding dimension (latent space of model)

latent_dim: int = 25

# An underscore-separated string of layer sizes, e.g. '256_128' for the encoder; the reverse order is used for the decoder

hidden_layers: str = '256_128'

# force_train:bool = True # Force training when saved model could be used. Per default re-train model

patience: int = 50 # Patience for early stopping

sample_idx_position: int = 0 # position of index which is sample ID

model: str = 'VAE' # model name

model_key: str = 'VAE' # potentially alternative key for model (grid search)

save_pred_real_na: bool = True # Save all predictions for missing values

# metadata -> defaults for metadata extracted from machine data

meta_date_col: str = None # date column in meta data

meta_cat_col: str = None # category column in meta data

# Parameters

model = "VAE"

latent_dim = 10

batch_size = 64

epochs_max = 300

hidden_layers = "64"

sample_idx_position = 0

cuda = False

save_pred_real_na = True

fn_rawfile_metadata = "https://raw.githubusercontent.com/RasmussenLab/njab/HEAD/docs/tutorial/data/alzheimer/meta.csv"

folder_experiment = "runs/alzheimer_study"

model_key = "VAE"

Some argument transformations

{'folder_experiment': 'runs/alzheimer_study',

'folder_data': '',

'file_format': 'csv',

'fn_rawfile_metadata': 'https://raw.githubusercontent.com/RasmussenLab/njab/HEAD/docs/tutorial/data/alzheimer/meta.csv',

'epochs_max': 300,

'batch_size': 64,

'cuda': False,

'latent_dim': 10,

'hidden_layers': '64',

'patience': 50,

'sample_idx_position': 0,

'model': 'VAE',

'model_key': 'VAE',

'save_pred_real_na': True,

'meta_date_col': None,

'meta_cat_col': None}

{'batch_size': 64,

'cuda': False,

'data': Path('runs/alzheimer_study/data'),

'epochs_max': 300,

'file_format': 'csv',

'fn_rawfile_metadata': 'https://raw.githubusercontent.com/RasmussenLab/njab/HEAD/docs/tutorial/data/alzheimer/meta.csv',

'folder_data': '',

'folder_experiment': Path('runs/alzheimer_study'),

'hidden_layers': [64],

'latent_dim': 10,

'meta_cat_col': None,

'meta_date_col': None,

'model': 'VAE',

'model_key': 'VAE',

'out_figures': Path('runs/alzheimer_study/figures'),

'out_folder': Path('runs/alzheimer_study'),

'out_metrics': Path('runs/alzheimer_study'),

'out_models': Path('runs/alzheimer_study'),

'out_preds': Path('runs/alzheimer_study/preds'),

'patience': 50,

'sample_idx_position': 0,

'save_pred_real_na': True}
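The two dictionaries above differ mainly in that `hidden_layers` changes from the string `'64'` to the list `[64]` and that folder names become `Path` objects with derived output folders. A minimal sketch of such a transformation, assuming plain string splitting; the helper name `parse_hidden_layers` is hypothetical and not taken from pimmslearn:

```python
from pathlib import Path


def parse_hidden_layers(spec: str) -> list[int]:
    """Turn an underscore-separated string such as '256_128' into [256, 128]."""
    return [int(width) for width in spec.split("_") if width]


# Values copied from the parameter dump above.
raw_args = {"hidden_layers": "64", "folder_experiment": "runs/alzheimer_study"}

args = dict(raw_args)
args["hidden_layers"] = parse_hidden_layers(raw_args["hidden_layers"])
args["folder_experiment"] = Path(raw_args["folder_experiment"])
# Derived output folders, mirroring keys shown in the second dictionary.
args["out_figures"] = args["folder_experiment"] / "figures"
args["out_preds"] = args["folder_experiment"] / "preds"

print(args["hidden_layers"])           # [64]
print(parse_hidden_layers("256_128"))  # [256, 128]
```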

Some naming conventions

Load data in long format#

pimmslearn.io.datasplits - INFO Loaded 'train_X' from file: runs/alzheimer_study/data/train_X.csv

pimmslearn.io.datasplits - INFO Loaded 'val_y' from file: runs/alzheimer_study/data/val_y.csv

pimmslearn.io.datasplits - INFO Loaded 'test_y' from file: runs/alzheimer_study/data/test_y.csv

data is loaded in long format

Sample ID protein groups

Sample_077 H0Y5E4;H0YCV9;H0YD13;H0YDW7;P16070;P16070-10;P16070-11;P16070-12;P16070-13;P16070-14;P16070-15;P16070-16;P16070-17;P16070-18;P16070-3;P16070-4;P16070-5;P16070-6;P16070-7;P16070-8;P16070-9 19.957

Sample_055 K4DIA0;O75144;O75144-2 19.587

Sample_188 P13647 15.343

Sample_182 F6S8M0;H7C3P4;P15586;P15586-2 18.153

Sample_139 Q15904 20.459

Name: intensity, dtype: float64

Infer index names from long format

pimmslearn - INFO sample_id = 'Sample ID', single feature: index_column = 'protein groups'

load meta data for splits

| Sample ID | _collection site | _age at CSF collection | _gender | _t-tau [ng/L] | _p-tau [ng/L] | _Abeta-42 [ng/L] | _Abeta-40 [ng/L] | _Abeta-42/Abeta-40 ratio | _primary biochemical AD classification | _clinical AD diagnosis | _MMSE score |

|---|---|---|---|---|---|---|---|---|---|---|---|

| Sample_000 | Sweden | 71.000 | f | 703.000 | 85.000 | 562.000 | NaN | NaN | biochemical control | NaN | NaN |

| Sample_001 | Sweden | 77.000 | m | 518.000 | 91.000 | 334.000 | NaN | NaN | biochemical AD | NaN | NaN |

| Sample_002 | Sweden | 75.000 | m | 974.000 | 87.000 | 515.000 | NaN | NaN | biochemical AD | NaN | NaN |

| Sample_003 | Sweden | 72.000 | f | 950.000 | 109.000 | 394.000 | NaN | NaN | biochemical AD | NaN | NaN |

| Sample_004 | Sweden | 63.000 | f | 873.000 | 88.000 | 234.000 | NaN | NaN | biochemical AD | NaN | NaN |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Sample_205 | Berlin | 69.000 | f | 1,945.000 | NaN | 699.000 | 12,140.000 | 0.058 | biochemical AD | AD | 17.000 |

| Sample_206 | Berlin | 73.000 | m | 299.000 | NaN | 1,420.000 | 16,571.000 | 0.086 | biochemical control | non-AD | 28.000 |

| Sample_207 | Berlin | 71.000 | f | 262.000 | NaN | 639.000 | 9,663.000 | 0.066 | biochemical control | non-AD | 28.000 |

| Sample_208 | Berlin | 83.000 | m | 289.000 | NaN | 1,436.000 | 11,285.000 | 0.127 | biochemical control | non-AD | 24.000 |

| Sample_209 | Berlin | 63.000 | f | 591.000 | NaN | 1,299.000 | 11,232.000 | 0.116 | biochemical control | non-AD | 29.000 |

210 rows × 11 columns

Initialize Comparison#

replicates idea for truly missing values: define the truth by using n=3 replicates to impute each sample

real test data:

Not used for predictions or early stopping.

[x] add some additional NAs based on distribution of data

protein groups

A0A024QZX5;A0A087X1N8;P35237 197

A0A024R0T9;K7ER74;P02655 208

A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 185

A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 208

A0A075B6H7 97

Name: freq, dtype: int64

Produce some additional simulated samples#

The validation simulated NAs are used by all models to evaluate training performance.

| Sample ID | protein groups | observed |

|---|---|---|

| Sample_158 | Q9UN70;Q9UN70-2 | 14.630 |

| Sample_050 | Q9Y287 | 15.755 |

| Sample_107 | Q8N475;Q8N475-2 | 15.029 |

| Sample_199 | P06307 | 19.376 |

| Sample_067 | Q5VUB5 | 15.309 |

| ... | ... | ... |

| Sample_111 | F6SYF8;Q9UBP4 | 22.822 |

| Sample_002 | A0A0A0MT36 | 18.165 |

| Sample_049 | Q8WY21;Q8WY21-2;Q8WY21-3;Q8WY21-4 | 15.525 |

| Sample_182 | Q8NFT8 | 14.379 |

| Sample_123 | Q16853;Q16853-2 | 14.504 |

12600 rows × 1 columns

| | observed |

|---|---|

| count | 12,600.000 |

| mean | 16.339 |

| std | 2.741 |

| min | 7.209 |

| 25% | 14.412 |

| 50% | 15.935 |

| 75% | 17.910 |

| max | 30.140 |
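The 12,600 validation values summarized above are observed intensities that were masked on purpose. A minimal sketch of that idea, assuming uniform random sampling (the notes above mention NAs added "based on distribution of data", so the actual sampling scheme may differ); the toy data and the helper name `split_simulated_na` are hypothetical:

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)

# Toy long-format data: (Sample ID, protein groups) -> log intensity.
index = pd.MultiIndex.from_product(
    [[f"Sample_{i:03d}" for i in range(20)], [f"PG_{j}" for j in range(10)]],
    names=["Sample ID", "protein groups"],
)
observed = pd.Series(
    rng.normal(16.0, 2.7, size=len(index)), index=index, name="intensity"
)


def split_simulated_na(data: pd.Series, frac: float, seed: int = 0):
    """Hold out a random share of observed values: half validation, half test."""
    held_out = data.sample(frac=frac, random_state=seed)
    n_val = len(held_out) // 2
    val_y = held_out.iloc[:n_val]
    test_y = held_out.iloc[n_val:]
    # Everything that was not held out stays available for training.
    train_X = data[~data.index.isin(held_out.index)]
    return train_X, val_y, test_y


train_X, val_y, test_y = split_simulated_na(observed, frac=0.1)
print(len(train_X), len(val_y), len(test_y))
```

Because the held-out values are known, they can later serve as the truth when scoring model predictions.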

Data in wide format#

Autoencoders need data in wide format

| protein groups | A0A024QZX5;A0A087X1N8;P35237 | A0A024R0T9;K7ER74;P02655 | A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | A0A075B6H7 | A0A075B6H9 | A0A075B6I0 | A0A075B6I1 | A0A075B6I6 | A0A075B6I9 | ... | Q9Y653;Q9Y653-2;Q9Y653-3 | Q9Y696 | Q9Y6C2 | Q9Y6N6 | Q9Y6N7;Q9Y6N7-2;Q9Y6N7-4 | Q9Y6R7 | Q9Y6X5 | Q9Y6Y8;Q9Y6Y8-2 | Q9Y6Y9 | S4R3U6 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Sample ID | |||||||||||||||||||||

| Sample_000 | 15.912 | 16.852 | 15.570 | 16.481 | 17.301 | 20.246 | 16.764 | 17.584 | 16.988 | 20.054 | ... | 16.012 | 15.178 | NaN | 15.050 | 16.842 | NaN | NaN | 19.563 | NaN | 12.805 |

| Sample_001 | NaN | 16.874 | 15.519 | 16.387 | NaN | 19.941 | 18.786 | 17.144 | NaN | 19.067 | ... | 15.528 | 15.576 | NaN | 14.833 | 16.597 | 20.299 | 15.556 | 19.386 | 13.970 | 12.442 |

| Sample_002 | 16.111 | NaN | 15.935 | 16.416 | 18.175 | 19.251 | 16.832 | 15.671 | 17.012 | 18.569 | ... | 15.229 | 14.728 | 13.757 | 15.118 | 17.440 | 19.598 | 15.735 | 20.447 | 12.636 | 12.505 |

| Sample_003 | 16.107 | 17.032 | 15.802 | 16.979 | 15.963 | 19.628 | 17.852 | 18.877 | 14.182 | 18.985 | ... | 15.495 | 14.590 | 14.682 | 15.140 | 17.356 | 19.429 | NaN | 20.216 | NaN | 12.445 |

| Sample_004 | 15.603 | 15.331 | 15.375 | 16.679 | NaN | 20.450 | 18.682 | 17.081 | 14.140 | 19.686 | ... | 14.757 | NaN | NaN | 15.256 | 17.075 | 19.582 | 15.328 | NaN | 13.145 | NaN |

5 rows × 1421 columns
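Going from the long format (one intensity per sample and protein group) to the wide table above is a pivot. A minimal sketch with pandas, using three values copied from the table; the variable names are illustrative only:

```python
import pandas as pd

# A few long-format entries copied from the wide table above.
long_data = pd.Series(
    {
        ("Sample_000", "A0A024R0T9;K7ER74;P02655"): 16.852,
        ("Sample_000", "A0A075B6H7"): 17.301,
        ("Sample_001", "A0A024R0T9;K7ER74;P02655"): 16.874,
    },
    name="intensity",
)
long_data.index.names = ["Sample ID", "protein groups"]

# Rows become samples, columns become protein groups.
# Combinations absent from the long format show up as NaN.
wide = long_data.unstack("protein groups")
print(wide)
```

The missing entry for `Sample_001` and `A0A075B6H7` appears as `NaN`, matching the table above.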

Add interpolation performance#

Fill Validation data with potentially missing features#

| protein groups | A0A024QZX5;A0A087X1N8;P35237 | A0A024R0T9;K7ER74;P02655 | A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | A0A075B6H7 | A0A075B6H9 | A0A075B6I0 | A0A075B6I1 | A0A075B6I6 | A0A075B6I9 | ... | Q9Y653;Q9Y653-2;Q9Y653-3 | Q9Y696 | Q9Y6C2 | Q9Y6N6 | Q9Y6N7;Q9Y6N7-2;Q9Y6N7-4 | Q9Y6R7 | Q9Y6X5 | Q9Y6Y8;Q9Y6Y8-2 | Q9Y6Y9 | S4R3U6 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Sample ID | |||||||||||||||||||||

| Sample_000 | 15.912 | 16.852 | 15.570 | 16.481 | 17.301 | 20.246 | 16.764 | 17.584 | 16.988 | 20.054 | ... | 16.012 | 15.178 | NaN | 15.050 | 16.842 | NaN | NaN | 19.563 | NaN | 12.805 |

| Sample_001 | NaN | 16.874 | 15.519 | 16.387 | NaN | 19.941 | 18.786 | 17.144 | NaN | 19.067 | ... | 15.528 | 15.576 | NaN | 14.833 | 16.597 | 20.299 | 15.556 | 19.386 | 13.970 | 12.442 |

| Sample_002 | 16.111 | NaN | 15.935 | 16.416 | 18.175 | 19.251 | 16.832 | 15.671 | 17.012 | 18.569 | ... | 15.229 | 14.728 | 13.757 | 15.118 | 17.440 | 19.598 | 15.735 | 20.447 | 12.636 | 12.505 |

| Sample_003 | 16.107 | 17.032 | 15.802 | 16.979 | 15.963 | 19.628 | 17.852 | 18.877 | 14.182 | 18.985 | ... | 15.495 | 14.590 | 14.682 | 15.140 | 17.356 | 19.429 | NaN | 20.216 | NaN | 12.445 |

| Sample_004 | 15.603 | 15.331 | 15.375 | 16.679 | NaN | 20.450 | 18.682 | 17.081 | 14.140 | 19.686 | ... | 14.757 | NaN | NaN | 15.256 | 17.075 | 19.582 | 15.328 | NaN | 13.145 | NaN |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Sample_205 | 15.682 | 16.886 | 14.910 | 16.482 | NaN | 17.705 | 17.039 | NaN | 16.413 | 19.102 | ... | NaN | 15.684 | 14.236 | 15.415 | 17.551 | 17.922 | 16.340 | 19.928 | 12.929 | NaN |

| Sample_206 | 15.798 | 17.554 | 15.600 | 15.938 | NaN | 18.154 | 18.152 | 16.503 | 16.860 | 18.538 | ... | 15.422 | 16.106 | NaN | 15.345 | 17.084 | 18.708 | NaN | 19.433 | NaN | NaN |

| Sample_207 | 15.739 | NaN | 15.469 | 16.898 | NaN | 18.636 | 17.950 | 16.321 | 16.401 | 18.849 | ... | 15.808 | 16.098 | 14.403 | 15.715 | NaN | 18.725 | 16.138 | 19.599 | 13.637 | 11.174 |

| Sample_208 | 15.477 | 16.779 | 14.995 | 16.132 | NaN | 14.908 | NaN | NaN | 16.119 | 18.368 | ... | 15.157 | 16.712 | NaN | 14.640 | 16.533 | 19.411 | 15.807 | 19.545 | NaN | NaN |

| Sample_209 | NaN | 17.261 | 15.175 | 16.235 | NaN | 17.893 | 17.744 | 16.371 | 15.780 | 18.806 | ... | 15.237 | 15.652 | 15.211 | 14.205 | 16.749 | 19.275 | 15.732 | 19.577 | 11.042 | 11.791 |

210 rows × 1421 columns

| protein groups | A0A024QZX5;A0A087X1N8;P35237 | A0A024R0T9;K7ER74;P02655 | A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | A0A075B6H7 | A0A075B6H9 | A0A075B6I0 | A0A075B6I1 | A0A075B6I6 | A0A075B6I9 | ... | Q9Y653;Q9Y653-2;Q9Y653-3 | Q9Y696 | Q9Y6C2 | Q9Y6N6 | Q9Y6N7;Q9Y6N7-2;Q9Y6N7-4 | Q9Y6R7 | Q9Y6X5 | Q9Y6Y8;Q9Y6Y8-2 | Q9Y6Y9 | S4R3U6 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Sample ID | |||||||||||||||||||||

| Sample_000 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | 19.863 | NaN | NaN | NaN | NaN |

| Sample_001 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_002 | NaN | 14.523 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_003 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_004 | NaN | NaN | NaN | NaN | 15.473 | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | 14.048 | NaN | NaN | NaN | NaN | 19.867 | NaN | 12.235 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Sample_205 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | 11.802 |

| Sample_206 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_207 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_208 | NaN | NaN | NaN | NaN | NaN | NaN | 17.530 | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_209 | 15.727 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

210 rows × 1419 columns

| protein groups | A0A024QZX5;A0A087X1N8;P35237 | A0A024R0T9;K7ER74;P02655 | A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | A0A075B6H7 | A0A075B6H9 | A0A075B6I0 | A0A075B6I1 | A0A075B6I6 | A0A075B6I9 | ... | Q9Y653;Q9Y653-2;Q9Y653-3 | Q9Y696 | Q9Y6C2 | Q9Y6N6 | Q9Y6N7;Q9Y6N7-2;Q9Y6N7-4 | Q9Y6R7 | Q9Y6X5 | Q9Y6Y8;Q9Y6Y8-2 | Q9Y6Y9 | S4R3U6 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Sample ID | |||||||||||||||||||||

| Sample_000 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | 19.863 | NaN | NaN | NaN | NaN |

| Sample_001 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_002 | NaN | 14.523 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_003 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_004 | NaN | NaN | NaN | NaN | 15.473 | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | 14.048 | NaN | NaN | NaN | NaN | 19.867 | NaN | 12.235 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Sample_205 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | 11.802 |

| Sample_206 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_207 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_208 | NaN | NaN | NaN | NaN | NaN | NaN | 17.530 | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| Sample_209 | 15.727 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

210 rows × 1421 columns

Variational Autoencoder#

Analysis: DataLoaders, Model, transform#

Analysis: DataLoaders, Model#

VAE(

(encoder): Sequential(

(0): Linear(in_features=1421, out_features=64, bias=True)

(1): Dropout(p=0.2, inplace=False)

(2): BatchNorm1d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): LeakyReLU(negative_slope=0.1)

(4): Linear(in_features=64, out_features=20, bias=True)

)

(decoder): Sequential(

(0): Linear(in_features=10, out_features=64, bias=True)

(1): Dropout(p=0.2, inplace=False)

(2): BatchNorm1d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): LeakyReLU(negative_slope=0.1)

(4): Linear(in_features=64, out_features=2842, bias=True)

)

)
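In the printed model the encoder ends with 20 outputs for a latent dimension of 10, and the decoder ends with 2842 outputs for 1421 features. This is consistent with each network emitting a mean and a log-variance per dimension, although the output above does not state that layout. A shape-only sketch with NumPy under that assumption (random arrays stand in for the network outputs):

```python
import numpy as np

latent_dim, n_features, batch = 10, 1421, 4
rng = np.random.default_rng(0)

# Stand-ins for network outputs; only the shapes match the printed model.
encoder_out = rng.normal(size=(batch, 2 * latent_dim))   # 20 columns
decoder_out = rng.normal(size=(batch, 2 * n_features))   # 2842 columns

# Assumed layout: first half means, second half log-variances.
mu, logvar = np.split(encoder_out, 2, axis=1)

# Reparameterization trick: sample z while keeping mu and logvar differentiable.
z = mu + np.exp(0.5 * logvar) * rng.standard_normal(mu.shape)

# Same assumed split for the per-feature reconstruction distribution.
x_mu, x_logvar = np.split(decoder_out, 2, axis=1)

print(z.shape, x_mu.shape, x_logvar.shape)
```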

Training#

Start Fit

- before_fit : [TrainEvalCallback, Recorder, ProgressCallback, EarlyStoppingCallback]

Start Epoch Loop

- before_epoch : [Recorder, ProgressCallback]

Start Train

- before_train : [TrainEvalCallback, Recorder, ProgressCallback]

Start Batch Loop

- before_batch : [ModelAdapterVAE, CastToTensor]

- after_pred : [ModelAdapterVAE]

- after_loss : [ModelAdapterVAE]

- before_backward: []

- before_step : []

- after_step : []

- after_cancel_batch: []

- after_batch : [TrainEvalCallback, Recorder, ProgressCallback]

End Batch Loop

End Train

- after_cancel_train: [Recorder]

- after_train : [Recorder, ProgressCallback]

Start Valid

- before_validate: [TrainEvalCallback, Recorder, ProgressCallback]

Start Batch Loop

- **CBs same as train batch**: []

End Batch Loop

End Valid

- after_cancel_validate: [Recorder]

- after_validate : [Recorder, ProgressCallback]

End Epoch Loop

- after_cancel_epoch: []

- after_epoch : [Recorder, EarlyStoppingCallback]

End Fit

- after_cancel_fit: []

- after_fit : [ProgressCallback, EarlyStoppingCallback]

Adding an EarlyStoppingCallback results in an error. A potential fix in PR3509 is not yet in the current version. Try again later.

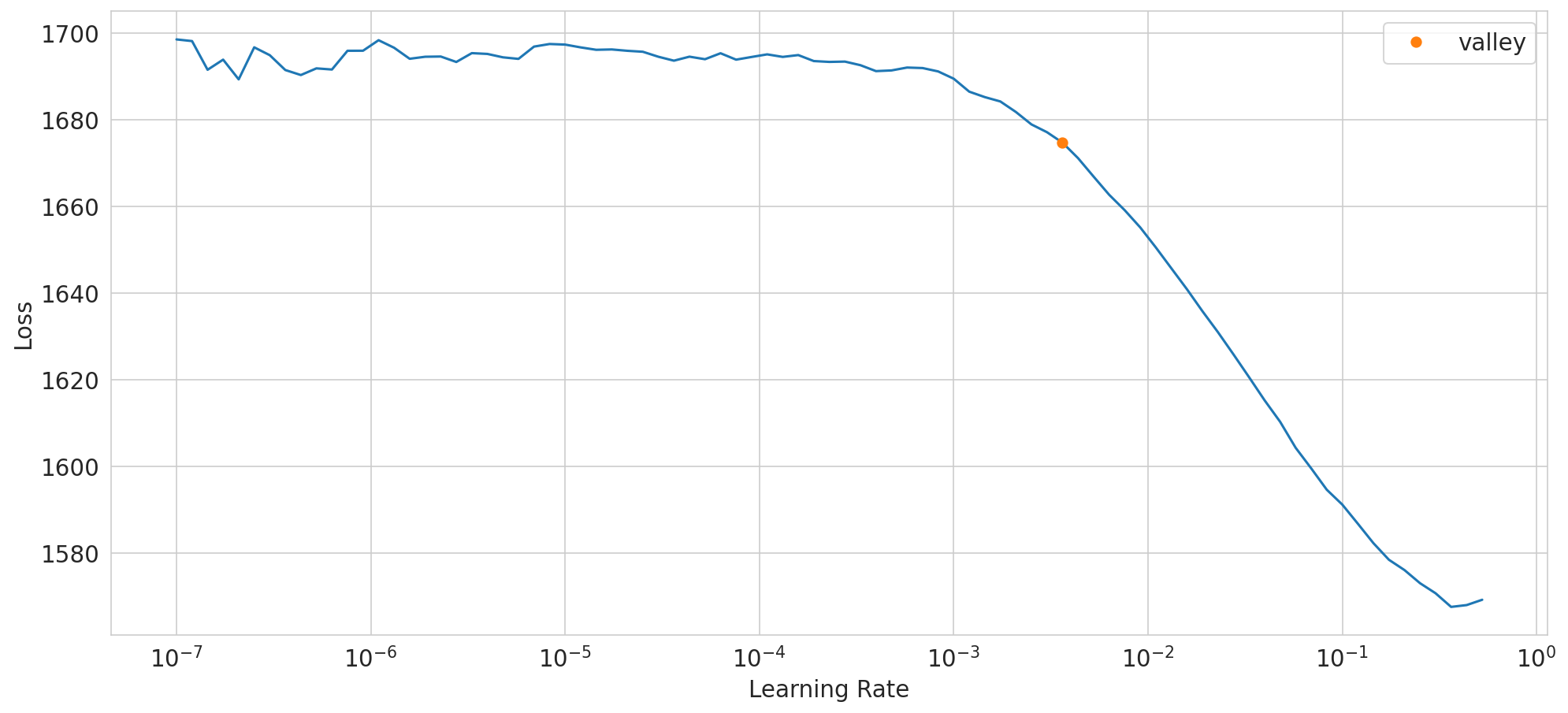

SuggestedLRs(valley=0.00363078061491251)

dump model config

| epoch | train_loss | valid_loss | time |

|---|---|---|---|

| 0 | 1691.338989 | 93.284309 | 00:00 |

| 1 | 1694.935181 | 94.120239 | 00:00 |

| 2 | 1693.476318 | 94.277519 | 00:00 |

| 3 | 1688.675781 | 95.055717 | 00:00 |

| 4 | 1684.217407 | 95.565231 | 00:00 |

| 5 | 1680.749390 | 95.311554 | 00:00 |

| 6 | 1677.090332 | 95.518379 | 00:00 |

| 7 | 1673.341675 | 95.470009 | 00:00 |

| 8 | 1668.986572 | 95.417801 | 00:00 |

| 9 | 1664.889771 | 95.675201 | 00:00 |

| 10 | 1660.648926 | 95.398727 | 00:00 |

| 11 | 1656.121826 | 95.217812 | 00:00 |

| 12 | 1649.528320 | 95.617752 | 00:00 |

| 13 | 1644.100342 | 95.221695 | 00:00 |

| 14 | 1637.495972 | 95.012856 | 00:00 |

| 15 | 1631.111450 | 94.997734 | 00:00 |

| 16 | 1624.852173 | 94.812172 | 00:00 |

| 17 | 1618.800171 | 94.772263 | 00:00 |

| 18 | 1611.479004 | 94.412094 | 00:00 |

| 19 | 1604.384033 | 94.142944 | 00:00 |

| 20 | 1596.532471 | 93.848213 | 00:00 |

| 21 | 1588.197510 | 93.751190 | 00:00 |

| 22 | 1579.560181 | 93.716026 | 00:00 |

| 23 | 1571.019043 | 93.867477 | 00:00 |

| 24 | 1560.528442 | 93.767868 | 00:00 |

| 25 | 1550.707642 | 94.265694 | 00:00 |

| 26 | 1539.965210 | 94.003326 | 00:00 |

| 27 | 1529.785400 | 93.902000 | 00:00 |

| 28 | 1518.473755 | 93.995125 | 00:00 |

| 29 | 1507.985718 | 93.816628 | 00:00 |

| 30 | 1498.336914 | 94.122383 | 00:00 |

| 31 | 1488.383911 | 93.963303 | 00:00 |

| 32 | 1478.874390 | 93.731239 | 00:00 |

| 33 | 1469.001343 | 93.704369 | 00:00 |

| 34 | 1459.065308 | 93.720451 | 00:00 |

| 35 | 1450.823730 | 94.081017 | 00:00 |

| 36 | 1440.387695 | 93.447823 | 00:00 |

| 37 | 1430.411011 | 93.479485 | 00:00 |

| 38 | 1418.962524 | 93.631592 | 00:00 |

| 39 | 1409.030396 | 94.117783 | 00:00 |

| 40 | 1398.834839 | 94.655060 | 00:00 |

| 41 | 1390.005615 | 94.385880 | 00:00 |

| 42 | 1381.869873 | 94.101784 | 00:00 |

| 43 | 1372.833130 | 94.055634 | 00:00 |

| 44 | 1363.454224 | 93.885567 | 00:00 |

| 45 | 1354.408081 | 93.405739 | 00:00 |

| 46 | 1347.405396 | 93.642700 | 00:00 |

| 47 | 1338.519653 | 93.214005 | 00:00 |

| 48 | 1330.838501 | 93.012627 | 00:00 |

| 49 | 1322.382935 | 93.288712 | 00:00 |

| 50 | 1314.060425 | 93.422943 | 00:00 |

| 51 | 1305.143311 | 93.119026 | 00:00 |

| 52 | 1299.443604 | 93.389877 | 00:00 |

| 53 | 1290.731934 | 93.431343 | 00:00 |

| 54 | 1283.108154 | 93.465004 | 00:00 |

| 55 | 1275.228760 | 93.199203 | 00:00 |

| 56 | 1267.513428 | 92.972969 | 00:00 |

| 57 | 1260.235352 | 93.345963 | 00:00 |

| 58 | 1252.195801 | 93.130249 | 00:00 |

| 59 | 1246.656372 | 92.795082 | 00:00 |

| 60 | 1240.382080 | 92.270836 | 00:00 |

| 61 | 1233.432617 | 91.869362 | 00:00 |

| 62 | 1227.860229 | 92.474640 | 00:00 |

| 63 | 1222.535645 | 92.998909 | 00:00 |

| 64 | 1215.958496 | 93.114639 | 00:00 |

| 65 | 1209.939697 | 92.617493 | 00:00 |

| 66 | 1203.956909 | 92.694855 | 00:00 |

| 67 | 1198.135254 | 92.403366 | 00:00 |

| 68 | 1192.092896 | 92.108070 | 00:00 |

| 69 | 1186.154663 | 91.914215 | 00:00 |

| 70 | 1184.010498 | 92.253746 | 00:00 |

| 71 | 1178.386475 | 92.085678 | 00:00 |

| 72 | 1173.211670 | 92.683044 | 00:00 |

| 73 | 1167.922241 | 92.330666 | 00:00 |

| 74 | 1165.605957 | 92.387360 | 00:00 |

| 75 | 1162.750000 | 92.716881 | 00:00 |

| 76 | 1158.671509 | 92.794868 | 00:00 |

| 77 | 1154.106445 | 92.275421 | 00:00 |

| 78 | 1151.134766 | 91.854477 | 00:00 |

| 79 | 1145.763672 | 91.599403 | 00:00 |

| 80 | 1142.354126 | 91.712036 | 00:00 |

| 81 | 1138.700562 | 91.678146 | 00:00 |

| 82 | 1134.806519 | 91.520737 | 00:00 |

| 83 | 1131.652100 | 92.280769 | 00:00 |

| 84 | 1128.975830 | 92.716644 | 00:00 |

| 85 | 1125.903931 | 93.336700 | 00:00 |

| 86 | 1123.163818 | 93.770470 | 00:00 |

| 87 | 1120.887451 | 92.911217 | 00:00 |

| 88 | 1117.699707 | 92.042419 | 00:00 |

| 89 | 1114.647217 | 92.267746 | 00:00 |

| 90 | 1113.437256 | 92.588501 | 00:00 |

| 91 | 1108.868408 | 92.275002 | 00:00 |

| 92 | 1106.293457 | 92.538887 | 00:00 |

| 93 | 1105.466309 | 92.887177 | 00:00 |

| 94 | 1103.627319 | 92.795021 | 00:00 |

| 95 | 1100.872070 | 92.818230 | 00:00 |

| 96 | 1100.651855 | 92.978165 | 00:00 |

| 97 | 1098.971313 | 92.894585 | 00:00 |

| 98 | 1096.512451 | 92.770973 | 00:00 |

| 99 | 1093.480835 | 92.673164 | 00:00 |

| 100 | 1093.447144 | 92.371826 | 00:00 |

| 101 | 1092.712036 | 92.463867 | 00:00 |

| 102 | 1092.046143 | 92.402382 | 00:00 |

| 103 | 1089.619873 | 92.375587 | 00:00 |

| 104 | 1091.259888 | 92.883286 | 00:00 |

| 105 | 1089.208496 | 93.026413 | 00:00 |

| 106 | 1087.265869 | 93.306175 | 00:00 |

| 107 | 1084.742676 | 93.119804 | 00:00 |

| 108 | 1082.698730 | 93.029793 | 00:00 |

| 109 | 1078.781250 | 92.693672 | 00:00 |

| 110 | 1078.068604 | 92.761566 | 00:00 |

| 111 | 1076.166748 | 93.500595 | 00:00 |

| 112 | 1075.657104 | 93.282394 | 00:00 |

| 113 | 1073.934326 | 93.790260 | 00:00 |

| 114 | 1071.749512 | 93.351761 | 00:00 |

| 115 | 1069.419434 | 93.710083 | 00:00 |

| 116 | 1069.230591 | 93.129936 | 00:00 |

| 117 | 1069.390137 | 93.802406 | 00:00 |

| 118 | 1067.713013 | 93.215652 | 00:00 |

| 119 | 1066.970947 | 93.573296 | 00:00 |

| 120 | 1064.930176 | 93.706917 | 00:00 |

| 121 | 1063.211914 | 93.777565 | 00:00 |

| 122 | 1062.055908 | 93.071968 | 00:00 |

| 123 | 1060.126831 | 92.679176 | 00:00 |

| 124 | 1059.712402 | 92.877518 | 00:00 |

| 125 | 1058.874756 | 92.967842 | 00:00 |

| 126 | 1059.032959 | 93.019684 | 00:00 |

| 127 | 1059.105225 | 92.983780 | 00:00 |

| 128 | 1057.219360 | 93.261261 | 00:00 |

| 129 | 1055.956421 | 94.654556 | 00:00 |

| 130 | 1054.487427 | 94.312889 | 00:00 |

| 131 | 1053.736084 | 93.269669 | 00:00 |

| 132 | 1053.795410 | 92.921295 | 00:00 |

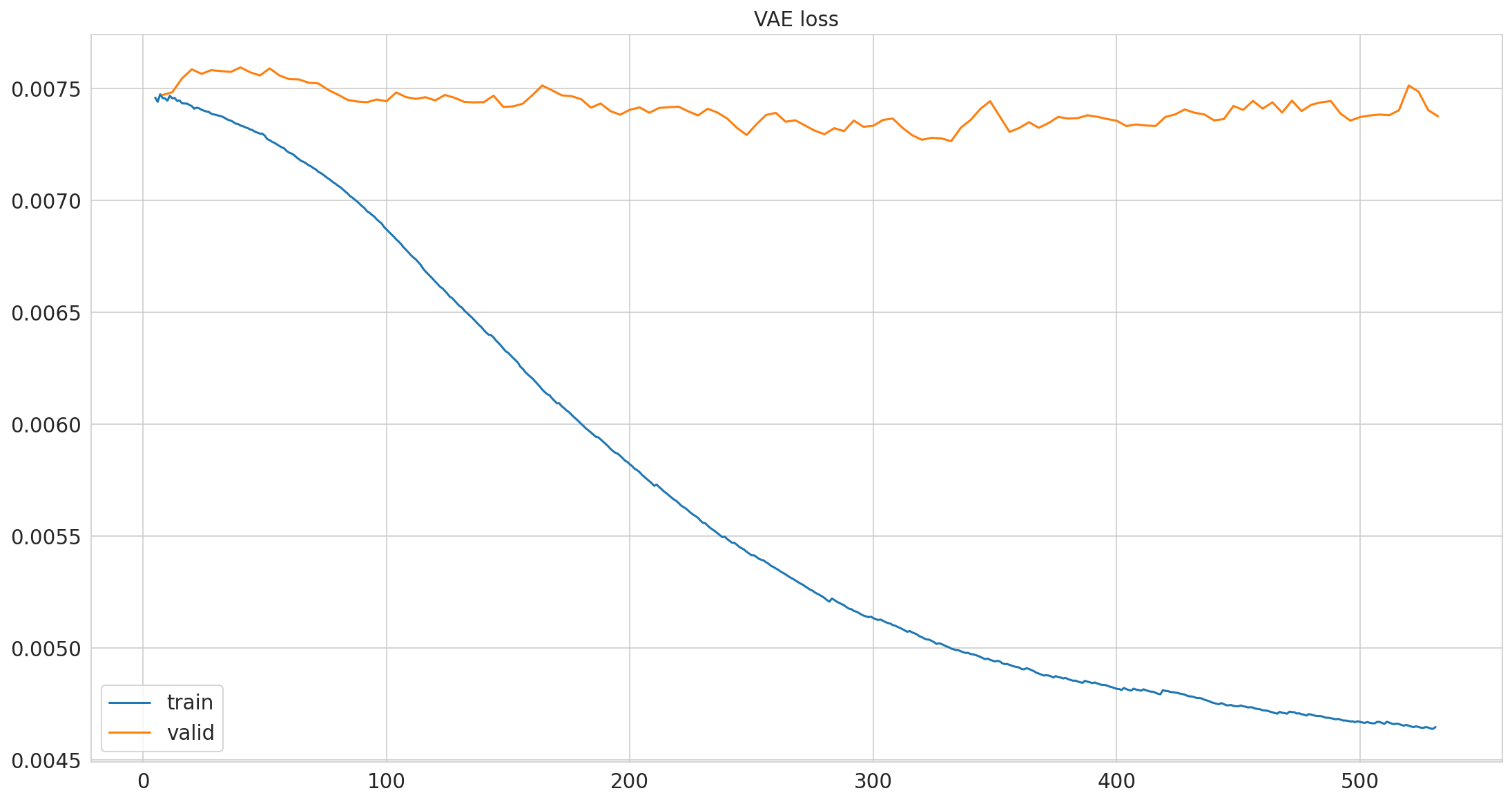

No improvement since epoch 82: early stopping

Save number of actually trained epochs

133
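The numbers above fit together: the best validation loss occurs at epoch 82, the patience is 50, so training stops after epoch 132, which gives 133 trained epochs (counting from 0). A minimal sketch of patience-based early stopping in plain Python; it illustrates the rule only, is not the fastai callback, and ignores options such as a minimum improvement threshold:

```python
def run_with_early_stopping(valid_losses, patience):
    """Return (epochs_run, best_epoch) for a sequence of per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(valid_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` consecutive epochs: stop here.
            return epoch + 1, best_epoch
    return len(valid_losses), best_epoch


# Toy example: the best loss is at epoch 2, then three epochs without improvement.
print(run_with_early_stopping([5.0, 4.0, 3.0, 3.5, 3.2, 3.1, 3.4], patience=3))  # (6, 2)
```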

Loss normalized by total number of measurements#

pimmslearn.plotting - INFO Saved Figures to runs/alzheimer_study/figures/vae_training

Predictions#

create predictions and select validation data predictions

Sample ID protein groups

Sample_000 A0A024QZX5;A0A087X1N8;P35237 15.952

A0A024R0T9;K7ER74;P02655 16.852

A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 15.828

A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 16.721

A0A075B6H7 16.963

...

Sample_209 Q9Y6R7 19.120

Q9Y6X5 15.494

Q9Y6Y8;Q9Y6Y8-2 19.302

Q9Y6Y9 11.906

S4R3U6 11.408

Length: 298410, dtype: float32

| Sample ID | protein groups | observed | VAE |

|---|---|---|---|

| Sample_158 | Q9UN70;Q9UN70-2 | 14.630 | 15.809 |

| Sample_050 | Q9Y287 | 15.755 | 16.745 |

| Sample_107 | Q8N475;Q8N475-2 | 15.029 | 14.855 |

| Sample_199 | P06307 | 19.376 | 18.962 |

| Sample_067 | Q5VUB5 | 15.309 | 14.887 |

| ... | ... | ... | ... |

| Sample_111 | F6SYF8;Q9UBP4 | 22.822 | 22.788 |

| Sample_002 | A0A0A0MT36 | 18.165 | 15.909 |

| Sample_049 | Q8WY21;Q8WY21-2;Q8WY21-3;Q8WY21-4 | 15.525 | 15.770 |

| Sample_182 | Q8NFT8 | 14.379 | 13.472 |

| Sample_123 | Q16853;Q16853-2 | 14.504 | 14.590 |

12600 rows × 2 columns

| Sample ID | protein groups | observed | VAE |

|---|---|---|---|

| Sample_000 | A0A075B6P5;P01615 | 17.016 | 17.000 |

| | A0A087X089;Q16627;Q16627-2 | 18.280 | 18.048 |

| | A0A0B4J2B5;S4R460 | 21.735 | 22.254 |

| | A0A140T971;O95865;Q5SRR8;Q5SSV3 | 14.603 | 15.171 |

| | A0A140TA33;A0A140TA41;A0A140TA52;P22105;P22105-3;P22105-4 | 16.143 | 16.614 |

| ... | ... | ... | ... |

| Sample_209 | Q96ID5 | 16.074 | 15.919 |

| | Q9H492;Q9H492-2 | 13.173 | 13.252 |

| | Q9HC57 | 14.207 | 14.405 |

| | Q9NPH3;Q9NPH3-2;Q9NPH3-5 | 14.962 | 15.150 |

| | Q9UGM5;Q9UGM5-2 | 16.871 | 16.542 |

12600 rows × 2 columns

save missing values predictions

Sample ID protein groups

Sample_000 A0A075B6J9 15.543

A0A075B6Q5 16.004

A0A075B6R2 16.716

A0A075B6S5 16.218

A0A087WSY4 16.244

...

Sample_209 Q9P1W8;Q9P1W8-2;Q9P1W8-4 15.949

Q9UI40;Q9UI40-2 16.280

Q9UIW2 16.678

Q9UMX0;Q9UMX0-2;Q9UMX0-4 13.118

Q9UP79 15.973

Name: intensity, Length: 46401, dtype: float32

Plots#

validation data

| Sample ID | latent dimension 1 | latent dimension 2 | latent dimension 3 | latent dimension 4 | latent dimension 5 | latent dimension 6 | latent dimension 7 | latent dimension 8 | latent dimension 9 | latent dimension 10 |

|---|---|---|---|---|---|---|---|---|---|---|

| Sample_000 | -1.068 | 1.363 | 2.057 | -0.254 | -2.342 | -2.372 | 0.390 | -0.662 | -0.785 | 0.868 |

| Sample_001 | -1.739 | 1.447 | 1.584 | -0.321 | -1.814 | -1.610 | 0.843 | -0.053 | 0.729 | 0.407 |

| Sample_002 | -0.373 | 0.380 | 1.815 | -0.923 | -1.882 | 0.149 | 1.880 | 0.388 | -1.020 | 0.494 |

| Sample_003 | -0.410 | 0.307 | 2.244 | 0.027 | -2.552 | -1.832 | 1.174 | 0.559 | -1.329 | 0.203 |

| Sample_004 | -1.623 | 0.033 | 1.979 | -0.100 | -1.309 | -1.533 | 0.506 | 0.700 | -0.859 | 0.119 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| Sample_205 | 0.942 | 0.793 | 1.981 | 1.784 | -1.460 | 0.416 | 0.406 | 0.706 | -0.226 | 1.156 |

| Sample_206 | 0.423 | 1.795 | 0.604 | -1.130 | 0.998 | 1.664 | -0.039 | -1.380 | 0.737 | 1.692 |

| Sample_207 | -1.058 | 1.586 | 0.372 | 0.462 | -0.120 | 0.123 | -0.939 | 0.585 | -1.428 | 1.364 |

| Sample_208 | -1.588 | 2.046 | 1.773 | 0.548 | 0.203 | 1.090 | -1.343 | -0.256 | 0.977 | 0.258 |

| Sample_209 | -0.785 | 1.289 | 1.066 | -0.520 | 0.827 | 1.674 | -0.230 | 1.592 | -1.349 | -0.689 |

210 rows × 10 columns

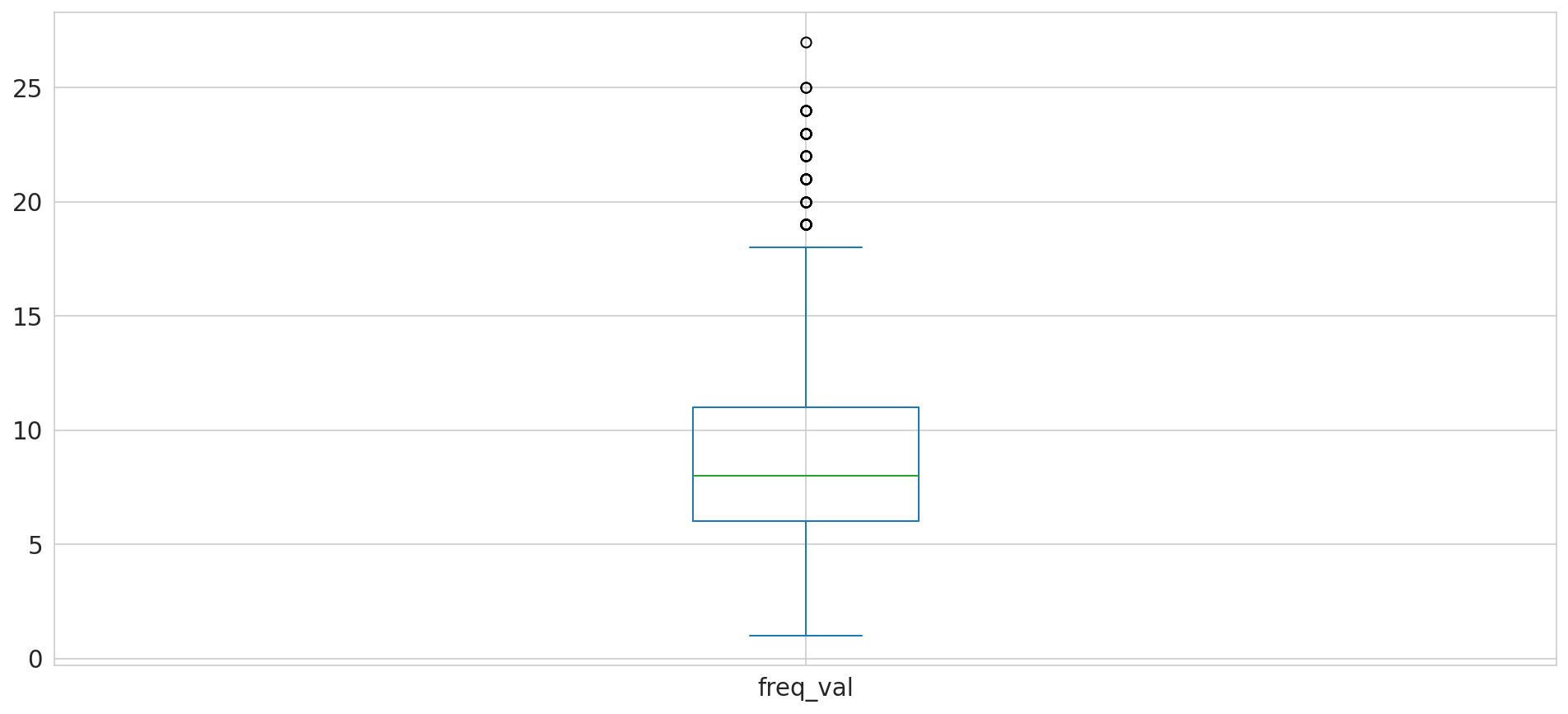

freq_val

1 12

2 18

3 50

4 82

5 108

Name: count, dtype: int64

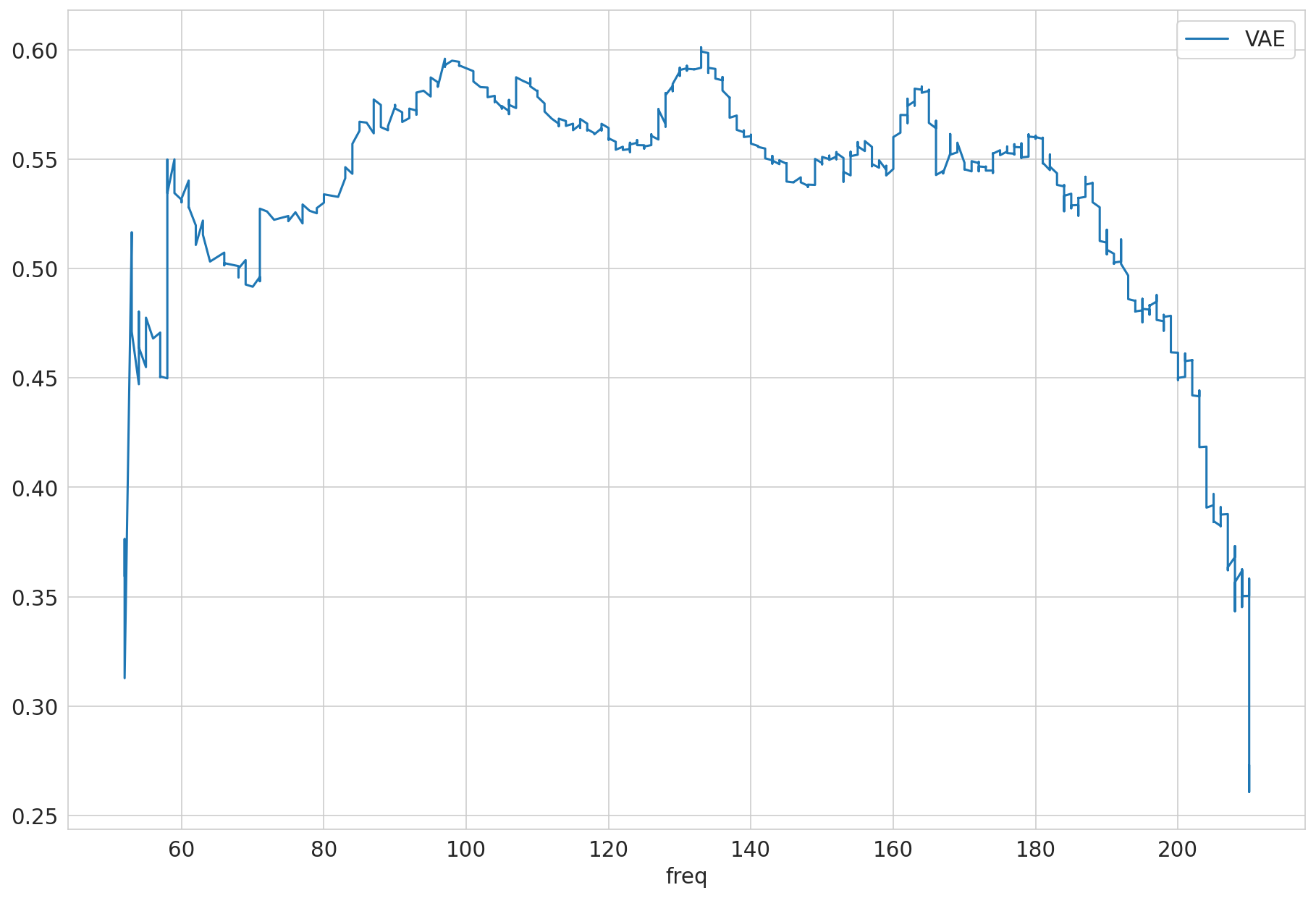

| protein groups | VAE mean | VAE count |

|---|---|---|

| A0A024QZX5;A0A087X1N8;P35237 | 0.160 | 7 |

| A0A024R0T9;K7ER74;P02655 | 1.268 | 4 |

| A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | 0.279 | 9 |

| A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | 0.301 | 6 |

| A0A075B6H7 | 0.503 | 6 |

| ... | ... | ... |

| Q9Y6R7 | 0.380 | 10 |

| Q9Y6X5 | 0.331 | 7 |

| Q9Y6Y8;Q9Y6Y8-2 | 0.326 | 9 |

| Q9Y6Y9 | 0.452 | 15 |

| S4R3U6 | 0.511 | 24 |

1419 rows × 2 columns

| Sample ID | protein groups | VAE |

|---|---|---|

| Sample_158 | Q9UN70;Q9UN70-2 | 1.178 |

| Sample_050 | Q9Y287 | 0.990 |

| Sample_107 | Q8N475;Q8N475-2 | -0.175 |

| Sample_199 | P06307 | -0.414 |

| Sample_067 | Q5VUB5 | -0.422 |

| ... | ... | ... |

| Sample_111 | F6SYF8;Q9UBP4 | -0.034 |

| Sample_002 | A0A0A0MT36 | -2.256 |

| Sample_049 | Q8WY21;Q8WY21-2;Q8WY21-3;Q8WY21-4 | 0.245 |

| Sample_182 | Q8NFT8 | -0.907 |

| Sample_123 | Q16853;Q16853-2 | 0.085 |

12600 rows × 1 columns

Comparisons#

Simulated NAs: Artificially created NAs. Some data was sampled and explicitly set to missing before it was fed to the model for reconstruction.

Validation data#

all measured (identified, observed) peptides in validation data

The simulated NAs for the validation step are real test data (used neither for training nor for early stopping)

Selected as truth to compare to: observed

{'VAE': {'MSE': 0.4574701866904295,

'MAE': 0.4312313042749696,

'N': 12600,

'prop': 1.0}}
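The MSE and MAE above compare the `VAE` predictions with the `observed` values of the simulated NAs. A minimal sketch of that computation on the ten validation rows displayed in the prediction table earlier, so the result will not equal the full-data metrics; the function name `score` is hypothetical, and `prop` is assumed here to mean the share of rows that have a prediction:

```python
import pandas as pd

# The ten validation rows displayed in the prediction table above.
pred = pd.DataFrame(
    {
        "observed": [14.630, 15.755, 15.029, 19.376, 15.309,
                     22.822, 18.165, 15.525, 14.379, 14.504],
        "VAE": [15.809, 16.745, 14.855, 18.962, 14.887,
                22.788, 15.909, 15.770, 13.472, 14.590],
    }
)


def score(df: pd.DataFrame, true_col: str = "observed", model_col: str = "VAE") -> dict:
    """Mean squared and mean absolute error of one model column against the truth."""
    error = (df[model_col] - df[true_col]).dropna()
    return {
        "MSE": float((error ** 2).mean()),
        "MAE": float(error.abs().mean()),
        "N": int(len(error)),
        "prop": len(error) / len(df),  # assumed meaning: share of rows with a prediction
    }


metrics = score(pred)
print(metrics)
```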

Test Datasplit#

Selected as truth to compare to: observed

{'VAE': {'MSE': 0.48066541670815066,

'MAE': 0.4364533778446176,

'N': 12600,

'prop': 1.0}}

Save all metrics as json

{ 'test_simulated_na': { 'VAE': { 'MAE': 0.4364533778446176,

'MSE': 0.48066541670815066,

'N': 12600,

'prop': 1.0}},

'valid_simulated_na': { 'VAE': { 'MAE': 0.4312313042749696,

'MSE': 0.4574701866904295,

'N': 12600,

'prop': 1.0}}}

| model | metric_name | valid_simulated_na | test_simulated_na |

|---|---|---|---|

| VAE | MSE | 0.457 | 0.481 |

| | MAE | 0.431 | 0.436 |

| | N | 12,600.000 | 12,600.000 |

| | prop | 1.000 | 1.000 |

Save predictions#

Config#

{}

{'M': 1421,

'batch_size': 64,

'cuda': False,

'data': Path('runs/alzheimer_study/data'),

'epoch_trained': 133,

'epochs_max': 300,

'file_format': 'csv',

'fn_rawfile_metadata': 'https://raw.githubusercontent.com/RasmussenLab/njab/HEAD/docs/tutorial/data/alzheimer/meta.csv',

'folder_data': '',

'folder_experiment': Path('runs/alzheimer_study'),

'hidden_layers': [64],

'latent_dim': 10,

'meta_cat_col': None,

'meta_date_col': None,

'model': 'VAE',

'model_key': 'VAE',

'n_params': 277998,

'out_figures': Path('runs/alzheimer_study/figures'),

'out_folder': Path('runs/alzheimer_study'),

'out_metrics': Path('runs/alzheimer_study'),

'out_models': Path('runs/alzheimer_study'),

'out_preds': Path('runs/alzheimer_study/preds'),

'patience': 50,

'sample_idx_position': 0,

'save_pred_real_na': True}
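The `n_params` value of 277998 can be checked by hand from the layer sizes in the printed model (`M` = 1421 features, one hidden layer of 64 units, `latent_dim` = 10). A small worked calculation, assuming only the Linear and BatchNorm1d layers carry trainable parameters; the helper names are illustrative:

```python
def linear_params(n_in: int, n_out: int) -> int:
    return n_in * n_out + n_out  # weight matrix plus bias vector


def batchnorm_params(n: int) -> int:
    return 2 * n  # learnable scale and shift; running statistics are not trainable


M, hidden, latent_dim = 1421, 64, 10

encoder = (
    linear_params(M, hidden)                  # Linear(1421 -> 64)
    + batchnorm_params(hidden)                # BatchNorm1d(64)
    + linear_params(hidden, 2 * latent_dim)   # Linear(64 -> 20)
)
decoder = (
    linear_params(latent_dim, hidden)         # Linear(10 -> 64)
    + batchnorm_params(hidden)                # BatchNorm1d(64)
    + linear_params(hidden, 2 * M)            # Linear(64 -> 2842)
)

n_params = encoder + decoder
print(n_params)  # 277998
```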