Compare outcomes from differential analysis based on different imputation methods

Load the scores computed by the 10_1_ald_diff_analysis notebook.

Parameters

Default parameters of the notebook, followed by the parameters set for this run.

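```python
folder_experiment = 'runs/appl_ald_data/plasma/proteinGroups'  # folder with the experiment results
target = 'kleiner'  # target variable of the differential analysis
model_key = 'VAE'  # model whose scores are compared against the baseline
baseline = 'RSN'  # baseline model
out_folder = 'diff_analysis'
selected_statistics = ['p-unc', '-Log10 pvalue', 'qvalue', 'rejected']  # statistics kept for the comparison
disease_ontology = 5082  # code from https://disease-ontology.org/
# split diseases notebook? Query gene names for proteins in file from UniProt?
annotaitons_gene_col = 'PG.Genes'  # column holding gene names
```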

```python
# Parameters (set for this run; they override the defaults above)
disease_ontology = 10652
folder_experiment = "runs/alzheimer_study"
target = "AD"
baseline = "PI"
model_key = "Median"
out_folder = "diff_analysis"
annotaitons_gene_col = "None"
```

Add the set parameters to the configuration:

```
root - INFO Removed from global namespace: folder_experiment
root - INFO Removed from global namespace: target
root - INFO Removed from global namespace: model_key
root - INFO Removed from global namespace: baseline
root - INFO Removed from global namespace: out_folder
root - INFO Removed from global namespace: selected_statistics
root - INFO Removed from global namespace: disease_ontology
root - INFO Removed from global namespace: annotaitons_gene_col
root - INFO Already set attribute: folder_experiment has value runs/alzheimer_study
root - INFO Already set attribute: out_folder has value diff_analysis
```

```python
{'annotaitons_gene_col': 'None',
 'baseline': 'PI',
 'data': PosixPath('runs/alzheimer_study/data'),
 'disease_ontology': 10652,
 'folder_experiment': PosixPath('runs/alzheimer_study'),
 'freq_features_observed': PosixPath('runs/alzheimer_study/freq_features_observed.csv'),
 'model_key': 'Median',
 'out_figures': PosixPath('runs/alzheimer_study/figures'),
 'out_folder': PosixPath('runs/alzheimer_study/diff_analysis/AD/PI_vs_Median'),
 'out_metrics': PosixPath('runs/alzheimer_study'),
 'out_models': PosixPath('runs/alzheimer_study'),
 'out_preds': PosixPath('runs/alzheimer_study/preds'),
 'scores_folder': PosixPath('runs/alzheimer_study/diff_analysis/AD/scores'),
 'selected_statistics': ['p-unc', '-Log10 pvalue', 'qvalue', 'rejected'],
 'target': 'AD'}
```
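
The printed configuration shows how the output folders are composed from the run parameters. A minimal sketch reproducing that layout, assuming it is exactly folder_experiment / out_folder / target / '<baseline>_vs_<model_key>' (inferred from the values above, not taken from the pimmslearn source); `derive_out_folders` is a hypothetical helper:

```python
from pathlib import Path


def derive_out_folders(folder_experiment: str, out_folder: str, target: str,
                       baseline: str, model_key: str) -> dict:
    """Rebuild the output paths seen in the configuration (assumed layout)."""
    # hypothetical helper: the layout is inferred from the printed values
    base = Path(folder_experiment) / out_folder / target
    return {
        'out_folder': base / f'{baseline}_vs_{model_key}',  # per-comparison results
        'scores_folder': base / 'scores',  # scores shared by all comparisons
    }


paths = derive_out_folders('runs/alzheimer_study', 'diff_analysis', 'AD', 'PI', 'Median')
print(paths['out_folder'])
print(paths['scores_folder'])
```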

Excel file for exports

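```python
# pd, args and logger are set up in earlier cells of the notebook
files_out = dict()  # collects the output files of this notebook (name -> path)
writer_args = dict(float_format='%.3f')  # export floats with three decimals

fname = args.out_folder / 'diff_analysis_compare_methods.xlsx'
files_out[fname.name] = fname
writer = pd.ExcelWriter(fname)  # sheets are added later; writer.close() saves the file
logger.info("Writing to excel file: %s", fname)
```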

root - INFO Writing to excel file: runs/alzheimer_study/diff_analysis/AD/PI_vs_Median/diff_analysis_compare_methods.xlsx

Load scores

Load baseline model scores

Show all statistics; only the selected statistics are used later on.

Model: PI

| protein groups | Source | SS | DF | F | p-unc | np2 | -Log10 pvalue | qvalue | rejected |
|---|---|---|---|---|---|---|---|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | AD | 0.274 | 1 | 0.399 | 0.528 | 0.002 | 0.277 | 0.670 | False |
| | age | 0.212 | 1 | 0.309 | 0.579 | 0.002 | 0.237 | 0.712 | False |
| | Kiel | 2.676 | 1 | 3.897 | 0.050 | 0.020 | 1.303 | 0.121 | False |
| | Magdeburg | 5.765 | 1 | 8.393 | 0.004 | 0.042 | 2.376 | 0.016 | True |
| | Sweden | 8.898 | 1 | 12.955 | 0.000 | 0.064 | 3.391 | 0.002 | True |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| S4R3U6 | AD | 0.074 | 1 | 0.070 | 0.791 | 0.000 | 0.102 | 0.872 | False |
| | age | 0.829 | 1 | 0.785 | 0.377 | 0.004 | 0.424 | 0.538 | False |
| | Kiel | 0.040 | 1 | 0.038 | 0.846 | 0.000 | 0.073 | 0.909 | False |
| | Magdeburg | 3.442 | 1 | 3.263 | 0.072 | 0.017 | 1.140 | 0.163 | False |
| | Sweden | 6.688 | 1 | 6.340 | 0.013 | 0.032 | 1.899 | 0.041 | True |

7105 rows × 8 columns
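
In the tables, -Log10 pvalue appears to be -log10 of p-unc, and rejected appears to flag q-values below 0.05 (qvalue 0.016 is True, 0.121 is False). A minimal sketch under these two assumptions; the threshold is inferred from the displayed values, and `add_derived_columns` is hypothetical. Because the displayed p-values are rounded, recomputed values can differ in the last digit (for instance -log10(0.050) is about 1.301, while the table shows 1.303):

```python
import numpy as np
import pandas as pd


def add_derived_columns(scores: pd.DataFrame, alpha: float = 0.05) -> pd.DataFrame:
    """Recompute '-Log10 pvalue' and 'rejected' from 'p-unc' and 'qvalue'.

    Assumption: decisions use alpha = 0.05 on the q-value (inferred, not documented).
    """
    out = scores.copy()
    # -log10 transform of the uncorrected p-value: larger means more significant
    out['-Log10 pvalue'] = -np.log10(out['p-unc'])
    # assumed decision rule: reject when the q-value is below alpha
    out['rejected'] = out['qvalue'] < alpha
    return out


# AD and Magdeburg rows of the first PI protein group above (rounded values)
example = pd.DataFrame({'p-unc': [0.528, 0.004], 'qvalue': [0.670, 0.016]},
                       index=['AD', 'Magdeburg'])
scored = add_derived_columns(example)
print(scored)
```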

Load scores of the selected comparison model

Model: Median

| protein groups | Source | SS | DF | F | p-unc | np2 | -Log10 pvalue | qvalue | rejected |
|---|---|---|---|---|---|---|---|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | AD | 0.830 | 1 | 6.377 | 0.012 | 0.032 | 1.907 | 0.039 | True |
| | age | 0.001 | 1 | 0.006 | 0.939 | 0.000 | 0.027 | 0.966 | False |
| | Kiel | 0.106 | 1 | 0.815 | 0.368 | 0.004 | 0.435 | 0.532 | False |
| | Magdeburg | 0.219 | 1 | 1.680 | 0.197 | 0.009 | 0.707 | 0.343 | False |
| | Sweden | 1.101 | 1 | 8.461 | 0.004 | 0.042 | 2.392 | 0.016 | True |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| S4R3U6 | AD | 0.051 | 1 | 0.119 | 0.730 | 0.001 | 0.136 | 0.829 | False |
| | age | 1.214 | 1 | 2.845 | 0.093 | 0.015 | 1.030 | 0.194 | False |
| | Kiel | 0.861 | 1 | 2.018 | 0.157 | 0.010 | 0.804 | 0.289 | False |
| | Magdeburg | 0.216 | 1 | 0.506 | 0.478 | 0.003 | 0.321 | 0.631 | False |
| | Sweden | 3.965 | 1 | 9.288 | 0.003 | 0.046 | 2.580 | 0.011 | True |

7105 rows × 8 columns

Combined scores

Show only the selected statistics for comparison.

| protein groups | Source | Median p-unc | Median -Log10 pvalue | Median qvalue | Median rejected | PI p-unc | PI -Log10 pvalue | PI qvalue | PI rejected |
|---|---|---|---|---|---|---|---|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | AD | 0.012 | 1.907 | 0.039 | True | 0.528 | 0.277 | 0.670 | False |
| | Kiel | 0.368 | 0.435 | 0.532 | False | 0.050 | 1.303 | 0.121 | False |
| | Magdeburg | 0.197 | 0.707 | 0.343 | False | 0.004 | 2.376 | 0.016 | True |
| | Sweden | 0.004 | 2.392 | 0.016 | True | 0.000 | 3.391 | 0.002 | True |
| | age | 0.939 | 0.027 | 0.966 | False | 0.579 | 0.237 | 0.712 | False |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| S4R3U6 | AD | 0.730 | 0.136 | 0.829 | False | 0.791 | 0.102 | 0.872 | False |
| | Kiel | 0.157 | 0.804 | 0.289 | False | 0.846 | 0.073 | 0.909 | False |
| | Magdeburg | 0.478 | 0.321 | 0.631 | False | 0.072 | 1.140 | 0.163 | False |
| | Sweden | 0.003 | 2.580 | 0.011 | True | 0.013 | 1.899 | 0.041 | True |
| | age | 0.093 | 1.030 | 0.194 | False | 0.377 | 0.424 | 0.538 | False |

7105 rows × 8 columns
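
Combining the two models amounts to placing their selected statistics side by side under a model column level. A minimal pandas sketch, assuming each model's scores are a DataFrame indexed by (protein groups, Source); `combine_scores` is a hypothetical helper, and the example values are copied from the first protein group in the tables above:

```python
import pandas as pd


def combine_scores(per_model: dict, selected: list) -> pd.DataFrame:
    """Place the selected statistics of each model side by side (hypothetical helper)."""
    keys = list(per_model)  # column order follows the order of the dict
    return pd.concat([per_model[key][selected] for key in keys],
                     axis=1, keys=keys, names=['model', 'var'])


idx = pd.MultiIndex.from_tuples(
    [('A0A024QZX5;A0A087X1N8;P35237', 'AD'), ('A0A024QZX5;A0A087X1N8;P35237', 'age')],
    names=['protein groups', 'Source'])
median = pd.DataFrame({'SS': [0.830, 0.001], 'p-unc': [0.012, 0.939],
                       '-Log10 pvalue': [1.907, 0.027], 'qvalue': [0.039, 0.966],
                       'rejected': [True, False]}, index=idx)
pi = pd.DataFrame({'SS': [0.274, 0.212], 'p-unc': [0.528, 0.579],
                   '-Log10 pvalue': [0.277, 0.237], 'qvalue': [0.670, 0.712],
                   'rejected': [False, False]}, index=idx)

selected_statistics = ['p-unc', '-Log10 pvalue', 'qvalue', 'rejected']
combined = combine_scores({'Median': median, 'PI': pi}, selected_statistics)
print(combined)
```

Passing the keys explicitly keeps the model order under control and drops columns such as SS that are not part of the selected statistics.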

Models in comparison (name mapping)

{'Median': 'Median', 'PI': 'PI'}

Describe scores

| | Median p-unc | Median -Log10 pvalue | Median qvalue | PI p-unc | PI -Log10 pvalue | PI qvalue |
|---|---|---|---|---|---|---|
| count | 7,105.000 | 7,105.000 | 7,105.000 | 7,105.000 | 7,105.000 | 7,105.000 |
| mean | 0.259 | 2.475 | 0.334 | 0.259 | 2.472 | 0.336 |
| std | 0.303 | 4.536 | 0.332 | 0.301 | 5.305 | 0.328 |
| min | 0.000 | 0.000 | 0.000 | 0.000 | 0.000 | 0.000 |
| 25% | 0.003 | 0.332 | 0.013 | 0.004 | 0.343 | 0.016 |
| 50% | 0.114 | 0.943 | 0.228 | 0.123 | 0.910 | 0.246 |
| 75% | 0.465 | 2.503 | 0.620 | 0.454 | 2.409 | 0.605 |
| max | 1.000 | 57.961 | 1.000 | 1.000 | 144.895 | 1.000 |

One-to-one comparison by feature

```
/tmp/ipykernel_77139/3761369923.py:2: FutureWarning: Starting with pandas version 3.0 all arguments of to_excel except for the argument 'excel_writer' will be keyword-only.
  scores.to_excel(writer, 'scores', **writer_args)
```

| protein groups | Source | Median p-unc | Median -Log10 pvalue | Median qvalue | Median rejected | PI p-unc | PI -Log10 pvalue | PI qvalue | PI rejected |
|---|---|---|---|---|---|---|---|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | AD | 0.012 | 1.907 | 0.039 | True | 0.528 | 0.277 | 0.670 | False |
| A0A024R0T9;K7ER74;P02655 | AD | 0.033 | 1.478 | 0.087 | False | 0.045 | 1.351 | 0.111 | False |
| A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | AD | 0.736 | 0.133 | 0.832 | False | 0.078 | 1.106 | 0.174 | False |
| A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | AD | 0.259 | 0.587 | 0.418 | False | 0.499 | 0.302 | 0.644 | False |
| A0A075B6H7 | AD | 0.053 | 1.278 | 0.124 | False | 0.091 | 1.040 | 0.195 | False |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| Q9Y6R7 | AD | 0.175 | 0.756 | 0.315 | False | 0.175 | 0.756 | 0.318 | False |
| Q9Y6X5 | AD | 0.291 | 0.536 | 0.455 | False | 0.096 | 1.019 | 0.203 | False |
| Q9Y6Y8;Q9Y6Y8-2 | AD | 0.083 | 1.079 | 0.178 | False | 0.083 | 1.079 | 0.182 | False |
| Q9Y6Y9 | AD | 0.520 | 0.284 | 0.667 | False | 0.452 | 0.345 | 0.604 | False |
| S4R3U6 | AD | 0.730 | 0.136 | 0.829 | False | 0.791 | 0.102 | 0.872 | False |

1421 rows × 8 columns
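
The one-to-one comparison appears to keep only the rows for the target (AD): each protein group has five rows (AD, age, Kiel, Magdeburg, Sweden), so 7105 / 5 = 1421 rows remain. A sketch of this selection with `DataFrame.xs`, assuming the row index levels are named as shown; `target_rows` and the protein group IDs P1 and P2 are hypothetical:

```python
import pandas as pd


def target_rows(scores: pd.DataFrame, target: str) -> pd.DataFrame:
    """Keep only the rows whose 'Source' index level equals the target."""
    # drop_level=False keeps the 'Source' level, as in the table above
    return scores.xs(target, level='Source', drop_level=False)


# hypothetical protein groups P1 and P2, each with the five Source terms
idx = pd.MultiIndex.from_product(
    [['P1', 'P2'], ['AD', 'age', 'Kiel', 'Magdeburg', 'Sweden']],
    names=['protein groups', 'Source'])
scores = pd.DataFrame({'qvalue': list(range(10))}, index=idx)  # dummy values
scores_target = target_rows(scores, 'AD')
print(scores_target)
```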

And the descriptive statistics of the numeric values:

| | Median p-unc | Median -Log10 pvalue | Median qvalue | PI p-unc | PI -Log10 pvalue | PI qvalue |
|---|---|---|---|---|---|---|
| count | 1,421.000 | 1,421.000 | 1,421.000 | 1,421.000 | 1,421.000 | 1,421.000 |
| mean | 0.283 | 1.311 | 0.368 | 0.251 | 1.400 | 0.333 |
| std | 0.302 | 1.599 | 0.325 | 0.290 | 1.600 | 0.314 |
| min | 0.000 | 0.000 | 0.000 | 0.000 | 0.000 | 0.000 |
| 25% | 0.017 | 0.310 | 0.051 | 0.012 | 0.371 | 0.038 |
| 50% | 0.171 | 0.767 | 0.309 | 0.124 | 0.907 | 0.247 |
| 75% | 0.490 | 1.760 | 0.640 | 0.426 | 1.934 | 0.583 |
| max | 1.000 | 14.393 | 1.000 | 0.999 | 21.057 | 1.000 |

and of the boolean decision values:

| | Median rejected | PI rejected |
|---|---|---|
| count | 1421 | 1421 |
| unique | 2 | 2 |
| top | False | False |
| freq | 1069 | 1023 |
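
For the boolean columns, top is the most frequent value and freq its count, so the number of features rejected (significant) by each model is count - freq: 1421 - 1069 = 352 for Median and 1421 - 1023 = 398 for PI. The same numbers can be counted directly. A minimal sketch, assuming the column levels are named model and var; `count_rejected` and the example data are hypothetical:

```python
import pandas as pd


def count_rejected(scores: pd.DataFrame) -> pd.Series:
    """Number of features with rejected == True, per model."""
    # select the 'rejected' column of every model; the 'var' level is dropped
    decisions = scores.xs('rejected', axis=1, level='var')
    return decisions.sum()  # True counts as 1


# hypothetical scores for three features
scores = pd.DataFrame({
    ('Median', 'qvalue'): [0.039, 0.087, 0.832],
    ('Median', 'rejected'): [True, False, False],
    ('PI', 'qvalue'): [0.670, 0.011, 0.040],
    ('PI', 'rejected'): [False, True, True],
})
scores.columns.names = ['model', 'var']
counts = count_rejected(scores)
print(counts)
```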

Load frequencies of observed features

| protein groups | frequency |
|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | 186 |
| A0A024R0T9;K7ER74;P02655 | 195 |
| A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | 174 |
| A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | 196 |
| A0A075B6H7 | 91 |
| ... | ... |
| Q9Y6R7 | 197 |
| Q9Y6X5 | 173 |
| Q9Y6Y8;Q9Y6Y8-2 | 197 |
| Q9Y6Y9 | 119 |
| S4R3U6 | 126 |

1421 rows × 1 columns
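
The frequency column presumably counts in how many samples each protein group was observed, i.e. had a non-missing intensity. A minimal sketch of such a count, assuming a wide table with samples as rows and protein groups as columns; the table and `observed_frequency` are hypothetical, not the code that produced freq_features_observed.csv:

```python
import numpy as np
import pandas as pd


def observed_frequency(intensities: pd.DataFrame) -> pd.Series:
    """Count, per feature (column), the samples (rows) with a non-missing value."""
    return (intensities.notna()
            .sum()
            .rename('frequency')
            .rename_axis('protein groups'))


# hypothetical wide table: 3 samples x 2 protein groups, NaN means not observed
intensities = pd.DataFrame({'P1': [20.1, np.nan, 21.3],
                            'P2': [np.nan, np.nan, 18.7]},
                           index=['sample_1', 'sample_2', 'sample_3'])
freq = observed_frequency(intensities)
print(freq)
```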

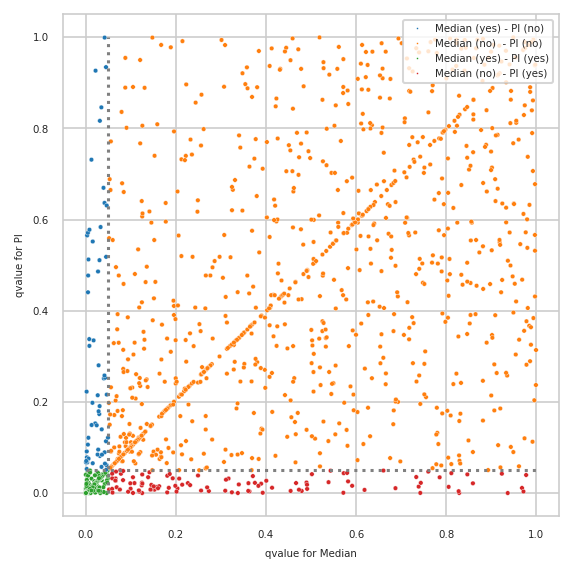

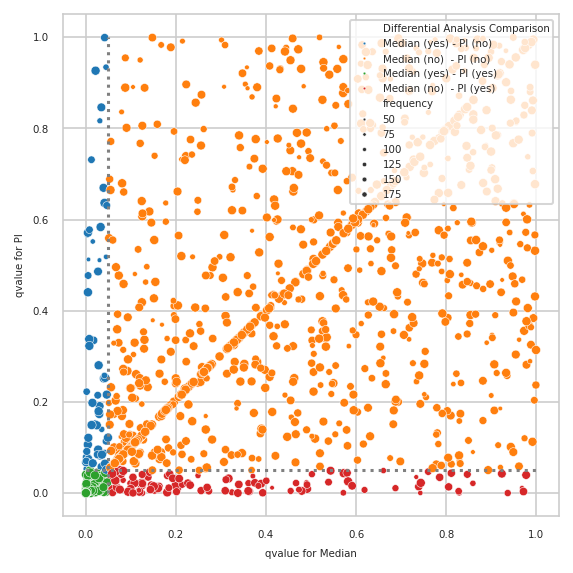

Plot q-values of both models with annotated decisions

Prepare data for plotting (q-values)

| protein groups | Median | PI | frequency | Differential Analysis Comparison |
|---|---|---|---|---|
| A0A024QZX5;A0A087X1N8;P35237 | 0.039 | 0.670 | 186 | Median (yes) - PI (no) |
| A0A024R0T9;K7ER74;P02655 | 0.087 | 0.111 | 195 | Median (no) - PI (no) |
| A0A024R3W6;A0A024R412;O60462;O60462-2;O60462-3;O60462-4;O60462-5;Q7LBX6;X5D2Q8 | 0.832 | 0.174 | 174 | Median (no) - PI (no) |
| A0A024R644;A0A0A0MRU5;A0A1B0GWI2;O75503 | 0.418 | 0.644 | 196 | Median (no) - PI (no) |
| A0A075B6H7 | 0.124 | 0.195 | 91 | Median (no) - PI (no) |
| ... | ... | ... | ... | ... |
| Q9Y6R7 | 0.315 | 0.318 | 197 | Median (no) - PI (no) |
| Q9Y6X5 | 0.455 | 0.203 | 173 | Median (no) - PI (no) |
| Q9Y6Y8;Q9Y6Y8-2 | 0.178 | 0.182 | 197 | Median (no) - PI (no) |
| Q9Y6Y9 | 0.667 | 0.604 | 119 | Median (no) - PI (no) |
| S4R3U6 | 0.829 | 0.872 | 126 | Median (no) - PI (no) |

1421 rows × 4 columns
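
The comparison label combines both decisions: yes when a model rejects the null hypothesis and no otherwise. A minimal sketch of building these labels from the per-model rejected columns; `decision_labels` is hypothetical, and the two example rows mirror the first rows of the table above:

```python
import pandas as pd


def decision_labels(rejected: pd.DataFrame) -> pd.Series:
    """Build labels like 'Median (yes) - PI (no)' from per-model decisions."""
    # one 'Model (yes|no)' string per model and feature
    parts = [model + ' (' + rejected[model].map({True: 'yes', False: 'no'}) + ')'
             for model in rejected.columns]
    labels = parts[0]
    for part in parts[1:]:
        labels = labels + ' - ' + part
    return labels.rename('Differential Analysis Comparison')


rejected = pd.DataFrame({'Median': [True, False], 'PI': [False, False]},
                        index=['A0A024QZX5;A0A087X1N8;P35237',
                               'A0A024R0T9;K7ER74;P02655'])
labels = decision_labels(rejected)
print(labels)
```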

Features with the largest difference in q-values

| protein groups | Median | PI | frequency | Differential Analysis Comparison | diff_qvalue |
|---|---|---|---|---|---|
| Q6NUJ2 | 0.972 | 0.003 | 165 | Median (no) - PI (yes) | 0.969 |
| P22748 | 0.042 | 0.999 | 159 | Median (yes) - PI (no) | 0.957 |
| D3YTG3;H0Y897;Q7Z7G0;Q7Z7G0-2;Q7Z7G0-3;Q7Z7G0-4 | 0.969 | 0.011 | 58 | Median (no) - PI (yes) | 0.957 |
| Q6P4E1;Q6P4E1-4;Q6P4E1-5 | 0.978 | 0.040 | 178 | Median (no) - PI (yes) | 0.938 |
| P52758 | 0.937 | 0.000 | 119 | Median (no) - PI (yes) | 0.937 |
| ... | ... | ... | ... | ... | ... |
| P14621;U3KPX8;U3KQL2 | 0.042 | 0.065 | 188 | Median (yes) - PI (no) | 0.022 |
| Q6P9A2 | 0.067 | 0.047 | 168 | Median (no) - PI (yes) | 0.020 |
| Q9P2E7;Q9P2E7-2 | 0.058 | 0.042 | 196 | Median (no) - PI (yes) | 0.016 |
| A0A0A0MTP9;F8VZI9;Q9BWQ8 | 0.046 | 0.061 | 193 | Median (yes) - PI (no) | 0.015 |
| Q9BUJ0 | 0.045 | 0.051 | 185 | Median (yes) - PI (no) | 0.006 |

164 rows × 5 columns
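
All listed features differ in their decisions between the two models, and diff_qvalue matches the absolute difference of the two q-values (Q6NUJ2: |0.972 - 0.003| = 0.969). A minimal sketch of this selection, assuming features are first restricted to differing decisions and then sorted by diff_qvalue; the name mask_different mirrors the variable used in the last cell of this notebook, but this definition is an assumption, and `largest_qvalue_differences` is hypothetical:

```python
import pandas as pd


def largest_qvalue_differences(qvalues: pd.DataFrame, rejected: pd.DataFrame,
                               model_a: str, model_b: str) -> pd.DataFrame:
    """Features with differing decisions, sorted by absolute q-value difference."""
    # assumed definition: the two models reach different decisions
    mask_different = rejected[model_a] != rejected[model_b]
    out = qvalues.loc[mask_different, [model_a, model_b]].copy()
    out['diff_qvalue'] = (out[model_a] - out[model_b]).abs()
    return out.sort_values('diff_qvalue', ascending=False)


# q-values copied from the tables above
qvalues = pd.DataFrame({'Median': [0.972, 0.042, 0.315, 0.045],
                        'PI': [0.003, 0.999, 0.318, 0.051]},
                       index=['Q6NUJ2', 'P22748', 'Q9Y6R7', 'Q9BUJ0'])
rejected = qvalues < 0.05  # assumed decision threshold
top = largest_qvalue_differences(qvalues, rejected, 'Median', 'PI')
print(top)
```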

Differences plotted with the created annotations

pimmslearn.plotting - INFO Saved Figures to runs/alzheimer_study/diff_analysis/AD/PI_vs_Median/diff_analysis_comparision_1_Median

The second figure additionally shows, by the size of each circle, how often each feature was measured ("observed").

pimmslearn.plotting - INFO Saved Figures to runs/alzheimer_study/diff_analysis/AD/PI_vs_Median/diff_analysis_comparision_2_Median

Features only contained in the comparison model

This block exists because of a specific part of the ALD analysis in the paper.

root - INFO No features only in new comparision model.
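
The log reports that no feature occurs only in the comparison model. Such a check can be sketched as an index set difference; this is an assumption about what the block does, and `features_only_in` as well as the IDs P1 to P3 are hypothetical:

```python
import pandas as pd


def features_only_in(model_scores: pd.DataFrame,
                     baseline_scores: pd.DataFrame) -> pd.Index:
    """Index entries present in model_scores but missing from baseline_scores."""
    return model_scores.index.difference(baseline_scores.index)


baseline_scores = pd.DataFrame({'qvalue': [0.670, 0.111]}, index=['P1', 'P2'])
model_scores = pd.DataFrame({'qvalue': [0.039, 0.087, 0.500]},
                            index=['P1', 'P2', 'P3'])
only_new = features_only_in(model_scores, baseline_scores)
print(list(only_new))
```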

DISEASES DB lookup

Query the DISEASES database for genes associated with the specified disease ontology ID.

pimmslearn.databases.diseases - WARNING There are more associations available

| None | ENSP | score |
|---|---|---|
| APP | ENSP00000284981 | 5.000 |
| PSEN1 | ENSP00000326366 | 5.000 |
| APOE | ENSP00000252486 | 5.000 |
| PSEN2 | ENSP00000355747 | 5.000 |
| TREM2 | ENSP00000362205 | 4.825 |
| ... | ... | ... |
| hsa-miR-760 | hsa-miR-760 | 0.682 |
| PCDH11Y | ENSP00000355419 | 0.682 |
| JPH1 | ENSP00000344488 | 0.682 |
| RCN1 | ENSP00000054950 | 0.682 |
| RNF157 | ENSP00000269391 | 0.682 |

10000 rows × 2 columns
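
Each association comes with a confidence score; in the excerpt above the scores run from 5.000 down to 0.682. A minimal sketch of keeping only strong associations and matching them against gene names of measured protein groups; the cutoff of 4.0, the measured gene list and `high_confidence_genes` are hypothetical, and this is not the pimmslearn.databases.diseases API:

```python
import pandas as pd


def high_confidence_genes(associations: pd.DataFrame, measured_genes,
                          min_score: float) -> list:
    """Genes with score >= min_score that are also among the measured genes."""
    strong = associations.loc[associations['score'] >= min_score]
    return sorted(set(strong.index) & set(measured_genes))


# rows copied from the table above (index = gene name)
associations = pd.DataFrame(
    {'ENSP': ['ENSP00000284981', 'ENSP00000326366',
              'ENSP00000362205', 'ENSP00000054950'],
     'score': [5.000, 5.000, 4.825, 0.682]},
    index=['APP', 'PSEN1', 'TREM2', 'RCN1'])
measured_genes = ['APP', 'TREM2', 'RCN1', 'GAPDH']  # hypothetical gene list
genes = high_confidence_genes(associations, measured_genes, min_score=4.0)  # hypothetical cutoff
print(genes)
```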

Only by model

Features significant by only one model

Only significant by the baseline model (RSN by default, PI in this run)

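```python
# mask_different is defined in an earlier (not shown) cell; presumably it flags
# features whose decisions differ between the two models
mask = scores_common[(str(args.baseline), 'rejected')] & mask_different
mask.sum()  # number of features significant only by the baseline model
```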